Automating Least-Privilege and Data Minimization with DSPM

Why modern data security demands action, not just visibility

Henna

In May 2025, a breach at Coinbase made headlines for an uncomfortable reason. There was no zero-day exploit, no infrastructure misconfiguration, no sophisticated intrusion chain. Instead, a group of overseas support agents (people with legitimate, authorized access) were bribed to exfiltrate customer data. Nearly 70,000 users were affected, and the attackers followed up with a $20 million ransom demand. (Source)

What made the incident notable was not its scale, but its simplicity. The attackers didn’t bypass security controls; they used them. Access had been granted for operational reasons and never meaningfully constrained. Once that access was abused, the damage was already done.

This is no longer an edge case. It is the dominant failure mode in modern data breaches.

Organizations today may know where their sensitive data lives. Many can even classify it accurately. Yet breaches continue because access itself has grown uncontrolled. Data is shared broadly to enable collaboration, permissions accumulate quietly over time, and access outlives both its original purpose and the people it was meant for. Visibility without enforcement has proven insufficient.

This is the inflection point for Data Security Posture Management (DSPM). Discovery is no longer the goal. Reducing risk is.

Why excessive access has become the primary breach vector

Modern data environments were built for speed, not restraint. Shared drives, cloud storage, SaaS platforms, and collaboration tools make it easy to grant access, and deceptively hard to take it back.

Over time, organizations develop a familiar pattern of risk. Shared folders are opened to “everyone” for convenience. Access is inherited through nested groups that no one remembers configuring. External sharing links persist long after projects end. Employees change roles, contractors roll off, and service accounts multiply, yet permissions quietly remain. Files that should have been archived or deleted years ago continue to sit exposed.

When an account is compromised or misused, the impact is determined not by how attackers got in, but by how far they can reach once inside. This “blast radius” expands gradually and invisibly, until a single event reveals just how much access had accumulated.
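To make the idea of blast radius concrete, the following is a minimal sketch under simplifying assumptions: groups contain users or other groups, and each resource has a set of granted principals plus a sensitivity flag. The names `MEMBERS`, `ACL`, `groups_of`, and `blast_radius` are hypothetical, not any product's API. The sketch expands nested membership (guarding against cycles) and returns every sensitive resource a compromised account could reach.

```python
from collections import deque

# Hypothetical directory: group -> direct members (users or other groups).
MEMBERS: dict[str, set[str]] = {
    "everyone": {"grp-finance", "grp-contractors"},
    "grp-finance": {"alice", "grp-finance-ops"},
    "grp-finance-ops": {"bob", "grp-finance"},  # circular nesting nobody remembers adding
    "grp-contractors": {"carol"},
}

# Hypothetical ACLs: resource -> (principals granted access, is_sensitive).
ACL: dict[str, tuple[set[str], bool]] = {
    "/shared/payroll.xlsx": ({"grp-finance"}, True),
    "/shared/handbook.pdf": ({"everyone"}, False),
    "/shared/cards.csv": ({"everyone"}, True),
    "/projects/q3-plan.docx": ({"carol"}, False),
}

def groups_of(user: str) -> set[str]:
    """Return every group the user belongs to, directly or through nesting."""
    # Invert the membership map: member -> groups that directly contain it.
    parents: dict[str, set[str]] = {}
    for group, members in MEMBERS.items():
        for member in members:
            parents.setdefault(member, set()).add(group)
    seen: set[str] = set()
    queue = deque(parents.get(user, ()))
    while queue:
        group = queue.popleft()
        if group in seen:  # stops infinite loops on membership cycles
            continue
        seen.add(group)
        queue.extend(parents.get(group, ()))
    return seen

def blast_radius(user: str) -> set[str]:
    """Sensitive resources reachable if this user's account is compromised."""
    principals = {user} | groups_of(user)
    return {res for res, (grantees, sensitive) in ACL.items()
            if sensitive and grantees & principals}

print(sorted(blast_radius("bob")))
```

Bob was never granted the payroll file directly. He reaches it because `grp-finance-ops` is nested inside `grp-finance`, and he reaches the card data because it is shared with `everyone`. Neither path is visible from his own account record.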

The uncomfortable truth is that this problem cannot be solved through one-time cleanups or quarterly reviews. Data is created, copied, and shared faster than any manual process can govern. Without continuous enforcement, exposure inevitably returns.

Least privilege is not restriction; it is precision

Least privilege is often framed as a tradeoff between security and productivity. In practice, it does not have to be one. Done correctly, least privilege is about precision, not limitation.

In shared environments, least privilege means access is granted to a real person, for a defined business reason, and reviewed continuously. It means permissions narrow as roles change, expire when they are no longer needed, and adapt automatically as data sensitivity changes.
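The definition above translates naturally into checks that can run against every grant, on a schedule or whenever an identity or file changes. A minimal sketch, assuming a hypothetical `Grant` record with a holder, a documented justification, the role it was approved for, and an optional expiry; none of these fields come from a specific product.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class Grant:
    holder: str                    # identity holding the permission
    is_human: bool                 # False for service accounts and shared mailboxes
    resource: str
    justification: Optional[str]   # documented business reason, if any
    issued_for_role: str           # holder's role when the grant was approved
    expires: Optional[date]        # None means the grant never expires

def violations(grant: Grant, current_role: str, today: date) -> list[str]:
    """Return the least-privilege rules this grant breaks (empty list = compliant)."""
    problems: list[str] = []
    if not grant.is_human:
        problems.append("not tied to a real person")
    if not grant.justification:
        problems.append("no documented business reason")
    if grant.issued_for_role != current_role:
        problems.append("holder changed roles since approval")
    if grant.expires is None:
        problems.append("no expiry set")
    elif grant.expires < today:
        problems.append("grant has expired")
    return problems

g = Grant("dave", True, "/shared/payroll.xlsx", "Q2 audit support",
          issued_for_role="auditor", expires=date(2025, 6, 30))
print(violations(g, current_role="sales", today=date(2025, 9, 1)))
# ['holder changed roles since approval', 'grant has expired']
```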

Traditional access controls struggle to achieve this. They rely on static group membership, ticket-based approvals, and periodic audits, mechanisms that were never designed for environments where access changes daily. As a result, security teams either tolerate excess access or attempt to manage it manually, introducing delays, friction, and error.

Modern DSPM must therefore move beyond identifying excessive access to enforcing least privilege continuously, without breaking legitimate workflows or overwhelming already stretched teams.

Automation without context is not enforcement

As DSPM has matured, many tools have introduced forms of automated remediation. In theory, this is progress. In practice, most automation still stops short of meaningful enforcement.

Traditional approaches tend to follow the same pattern: discover sensitive data, detect risky permissions, generate alerts, and hand the problem back to humans. Some go a step further by offering scripted remediation, but without sufficient context to act safely. Lacking clarity on who the data belongs to, how access was granted, and whether that access still serves a business purpose, automation either hesitates or acts bluntly.

The result is predictable. Over-remediation disrupts business operations. Under-remediation leaves exposure intact. Manual exceptions accumulate, quietly reintroducing risk.

Effective automation depends on identity context. Remediation must understand not just that access exists, but why it exists, and whether that reason still holds.
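One way to illustrate the difference between blunt and context-aware automation is a decision function that acts automatically only when the context supports it and otherwise routes the case to the data owner. The `AccessContext` fields and `Action` values below are hypothetical, chosen to mirror the questions above: who owns the data, how broadly access was granted, and whether its purpose still holds.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

class Action(Enum):
    KEEP = "keep"
    NARROW = "narrow"        # e.g. replace an 'everyone' grant with named people
    REVOKE = "revoke"
    ASK_OWNER = "ask_owner"  # not enough context to act safely

@dataclass
class AccessContext:
    data_owner: Optional[str]            # who the data belongs to, if known
    purpose_still_valid: Optional[bool]  # None means nobody has confirmed either way
    sensitive: bool
    broad_grant: bool                    # granted to 'everyone', a whole domain, or an anonymous link

def decide(ctx: AccessContext) -> Action:
    """Pick a remediation only when the available context justifies it."""
    # Without an owner or a known purpose, escalate instead of guessing.
    if ctx.data_owner is None or ctx.purpose_still_valid is None:
        return Action.ASK_OWNER
    # The business reason no longer holds: remove the access.
    if not ctx.purpose_still_valid:
        return Action.REVOKE
    # The reason holds, but the grant is wider than sensitive data warrants.
    if ctx.sensitive and ctx.broad_grant:
        return Action.NARROW
    return Action.KEEP

print(decide(AccessContext("alice", True, sensitive=True, broad_grant=True)))
```

The `ASK_OWNER` branch is the important design choice: missing context becomes a routed question rather than a guess, which is how a rule like this avoids both over- and under-remediation.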

What identity-aware remediation looks like in practice

Consider a common scenario faced by security teams. A security officer is alerted that an employee’s credentials may have been compromised or that the employee is leaving the organization. The immediate question is simple and urgent: What sensitive data can this person access right now?

In traditional environments, answering that question requires stitching together information from multiple systems — identity providers, file servers, SaaS platforms, and data owners — often under time pressure. By the time clarity emerges, exposure may already have occurred.

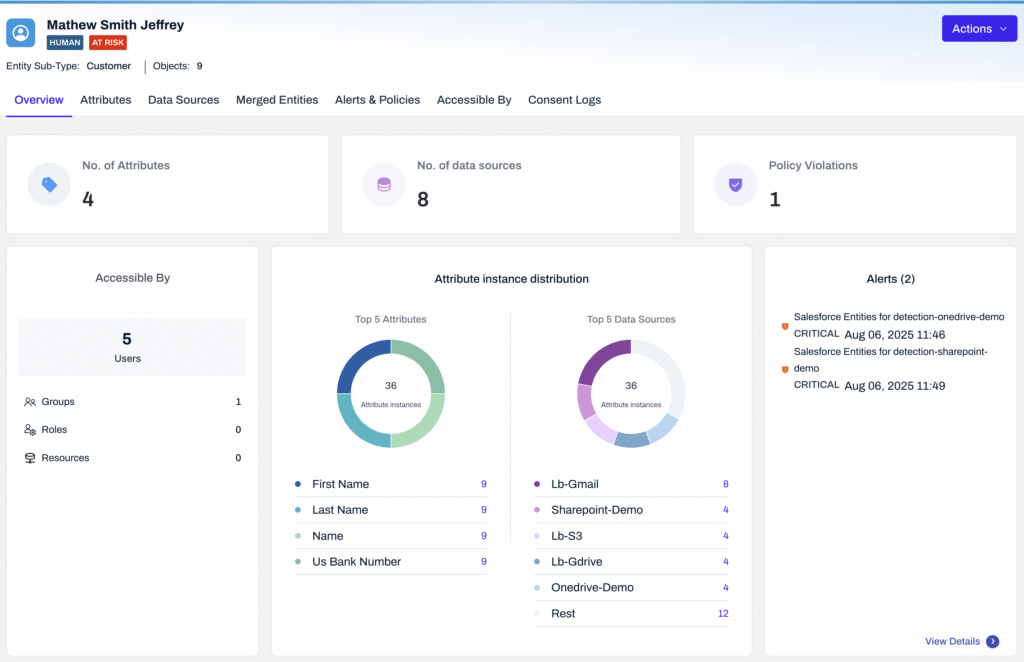

With identity-aware automation, the experience is fundamentally different. The security officer can immediately see every system, folder, and file the user can access, the sensitivity of that data, and how access was granted, whether directly, through a group, or via inherited permissions. From that same view, the officer can narrow access, revoke permissions, or trigger automated remediation. Every action is logged, attributed, and audit-ready by default.
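Answering the officer's question amounts to walking the folder tree while recording where each permission came from. A minimal sketch, assuming a hypothetical in-memory tree in which items inherit their parent's access unless inheritance is broken, and in which the user's group memberships have already been expanded (for example, by resolving nested groups). `Node` and `explain_access` are illustrative names only.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Node:
    path: str
    acl: set[str] = field(default_factory=set)  # principals granted explicitly on this node
    inherits: bool = True                        # False = inheritance broken at this node
    children: list["Node"] = field(default_factory=list)

def explain_access(root: Node, user: str, groups: set[str]) -> dict[str, str]:
    """Map every path the user can reach to how that access was granted."""
    result: dict[str, str] = {}

    def walk(node: Node, inherited_from: Optional[str]) -> None:
        via_groups = node.acl & groups
        if user in node.acl:
            source, origin = "direct", node.path
        elif via_groups:
            source, origin = "via group " + ", ".join(sorted(via_groups)), node.path
        elif inherited_from is not None and node.inherits:
            source, origin = f"inherited from {inherited_from}", inherited_from
        else:
            source, origin = None, None  # no grant here, and nothing (or nothing allowed) to inherit
        if source is not None:
            result[node.path] = source
        for child in node.children:
            walk(child, origin)

    walk(root, None)
    return result

root = Node("/finance", acl={"grp-finance"}, children=[
    Node("/finance/payroll", children=[Node("/finance/payroll/2024.xlsx")]),
    Node("/finance/board", acl={"cfo"}, inherits=False),
    Node("/finance/q3-forecast.xlsx", acl={"bob"}),
])
for path, how in explain_access(root, "bob", {"grp-finance"}).items():
    print(f"{path}: {how}")
```

Bob belongs to `grp-finance`, yet `/finance/board` is excluded because inheritance is broken there and only `cfo` is granted explicitly. The per-path explanation is what lets an officer decide whether to remove a group membership, break inheritance, or revoke a single direct grant.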

This is where automation stops being risky and starts being reliable. Context turns enforcement from a blunt instrument into precise control.

Data minimization as a security control, not just a regulation

Regulatory frameworks like GDPR, CCPA, GLBA, and PCI-DSS all emphasize data minimization: collect only what is necessary, retain it only as long as required, and protect it appropriately. Yet in many organizations, minimization remains a policy statement rather than an operational reality.

From a security perspective, unnecessary data is pure liability. Redundant, obsolete, and trivial (ROT) data expands the blast radius of any incident. Duplicate files multiply exposure. Forgotten archives and backups quietly violate retention rules while remaining accessible.


Modern DSPM reframes minimization as an active security control. By continuously identifying ROT data based on age, access patterns, duplication, and sensitivity, organizations can automatically archive, redact, or delete data that no longer serves a valid purpose. When minimization is enforced programmatically, entire categories of risk simply disappear.
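A minimal sketch of how age, access patterns, duplication, and sensitivity might combine into a suggested action per file. It covers the redundant and obsolete parts of ROT; trivial content usually needs content classification and is left out. `FileRecord`, `suggest_actions`, and both thresholds are hypothetical, and real retention periods should come from documented policy. Exact duplicates are detected with SHA-256 content hashes from Python's standard `hashlib`.

```python
import hashlib
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class FileRecord:
    path: str
    content: bytes
    modified: date
    last_accessed: date
    sensitive: bool

# Illustrative thresholds only; substitute the organization's documented retention schedule.
STALE_AFTER = timedelta(days=3 * 365)
UNUSED_AFTER = timedelta(days=365)

def suggest_actions(files: list[FileRecord], today: date) -> dict[str, str]:
    """Suggest 'delete', 'archive', 'redact', or 'keep' for each file."""
    actions: dict[str, str] = {}
    seen_hashes: set[str] = set()
    # Visit the earliest-modified copy first so later copies are the ones flagged as duplicates.
    for f in sorted(files, key=lambda r: r.modified):
        digest = hashlib.sha256(f.content).hexdigest()
        unused = today - f.last_accessed > UNUSED_AFTER
        if digest in seen_hashes:
            actions[f.path] = "delete"  # redundant: exact duplicate of an earlier file
        elif today - f.modified > STALE_AFTER and unused:
            actions[f.path] = "delete" if f.sensitive else "archive"  # obsolete
        elif f.sensitive and unused:
            actions[f.path] = "redact"  # still retained, but nobody reads the raw values
        else:
            actions[f.path] = "keep"
        seen_hashes.add(digest)
    return actions

today = date(2025, 6, 1)
files = [
    FileRecord("/hr/ssn_2019.csv", b"ssn,...", date(2019, 3, 1), date(2020, 1, 1), True),
    FileRecord("/hr/copy_of_ssn_2019.csv", b"ssn,...", date(2023, 5, 1), date(2023, 5, 1), True),
    FileRecord("/ops/runbook.md", b"steps", date(2018, 1, 1), date(2025, 5, 1), False),
    FileRecord("/sales/cards_2024.csv", b"pan,...", date(2024, 2, 1), date(2024, 3, 1), True),
    FileRecord("/sales/pipeline.xlsx", b"deals", date(2025, 4, 1), date(2025, 5, 30), False),
]
for path, action in suggest_actions(files, today).items():
    print(f"{action:8} {path}")
```

The 2018 runbook is kept despite its age because it is still being read, while the unused 2024 card export is flagged for redaction rather than deletion because it is still inside the illustrative retention window.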

Why this matters most for mid-size financial services firms

For mid-size financial institutions, the stakes are particularly high. Regulatory pressure increasingly mirrors what large enterprises face, especially under PCI-DSS and data protection mandates, yet their operational capacity does not. Manual reviews, ticket-driven access changes, and spreadsheet-based audits strain limited teams and fail to keep pace with dynamic environments.

What these organizations need is not more alerts or dashboards, but systems that close the loop automatically. DSPM must reduce risk continuously, not document it after the fact. This is why automation is no longer a convenience. It is a requirement.

Where Lightbeam changes the equation

Most tools treat data as static files and permissions as configuration settings.

Lightbeam treats data as relationships.

By linking sensitive content to the people it represents, Lightbeam turns governance into action. Its Data Identity Graph resolves aliases, service accounts, shared mailboxes, and nested groups into a single, human-understandable view. Security teams can see exactly who can access whose data, and why.
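Collapsing aliases and service accounts into one accountable person is, at its core, a connected-components problem. The sketch below is a generic union-find illustration, not Lightbeam's implementation; the evidence linking accounts (a shared HR employee ID, a declared service-account owner) is assumed to be supplied as input, and all account names are hypothetical.

```python
class IdentityResolver:
    """Union-find over account identifiers; each component is one real person."""

    def __init__(self) -> None:
        self.parent: dict[str, str] = {}

    def find(self, account: str) -> str:
        self.parent.setdefault(account, account)
        # Path halving keeps repeated lookups cheap as the graph grows.
        while self.parent[account] != account:
            self.parent[account] = self.parent[self.parent[account]]
            account = self.parent[account]
        return account

    def link(self, a: str, b: str) -> None:
        """Record evidence that accounts a and b belong to the same person."""
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a

    def people(self) -> list[set[str]]:
        """Group every known account under the person it resolves to."""
        groups: dict[str, set[str]] = {}
        for account in list(self.parent):
            groups.setdefault(self.find(account), set()).add(account)
        return list(groups.values())

r = IdentityResolver()
r.link("jdoe@corp.example", "john.doe@corp.example")  # same HR employee ID
r.link("jdoe@corp.example", "CORP\\jdoe")             # on-premises directory account
r.link("jdoe@corp.example", "svc-report-jd")          # service account owned by jdoe
r.link("asmith@corp.example", "CORP\\asmith")
for person in r.people():
    print(sorted(person))
```

Shared mailboxes and groups map to several people rather than one, so they belong in the group-expansion step instead of being merged here; merging them would wrongly fuse distinct individuals into a single identity.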

When a policy is violated (open access, excessive permissions, external sharing, or retention expiry), Lightbeam Playbooks act automatically. Access is narrowed or revoked, data is labeled, archived, redacted, or deleted, and every action is logged against a real human owner. No tickets. No brittle scripts. No guesswork.

Access reviews become audit-ready by design. Reviewers see every internal and external identity with access in a familiar, spreadsheet-style view, with immutable evidence that regulators trust. For data minimization, Lightbeam enforces retention and purpose limitation through rule-based workflows tied to documented business processes.
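Audit evidence is usually made tamper-evident with append-only storage. As a generic illustration, and not a description of how Lightbeam stores evidence, here is a hash-chained log in which each remediation record includes the SHA-256 digest of the previous record, so altering any past entry causes verification to fail. The class and field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

class AuditLog:
    """Append-only log where each entry commits to the hash of the one before it."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, actor: str, action: str, resource: str, owner: str) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "actor": actor,        # person or playbook that performed the action
            "action": action,      # e.g. "revoke", "narrow", "archive"
            "resource": resource,
            "owner": owner,        # accountable human owner of the data
            "prev": prev,
        }
        body["hash"] = self._digest(body)
        self.entries.append(body)
        return body

    @staticmethod
    def _digest(body: dict) -> str:
        # Hash a canonical JSON encoding of every field except the hash itself.
        payload = {k: v for k, v in body.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        """Recompute the chain; any edited, reordered, or removed entry breaks it."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True

log = AuditLog()
log.append("playbook:open-share", "narrow", "/shared/cards.csv", "alice")
log.append("officer:jlee", "revoke", "/finance/payroll", "alice")
print(log.verify())                    # True
log.entries[0]["owner"] = "mallory"    # tamper with history
print(log.verify())                    # False
```

A hash chain alone only detects edits by someone who cannot also rewrite every later entry; in practice the latest digest is typically anchored somewhere the log writer cannot modify, such as write-once storage.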

This is governance that acts rather than merely observes.

The shift that matters

The industry is moving from governance as posture to governance as proof.

In a world where access sprawl is inevitable, true control belongs to organizations that can see risk clearly and act automatically. DSPM reaches its full potential only when visibility turns into enforcement — and when policy becomes protection.

Frequently asked questions

Is there a DSPM platform that automates least-privilege clean-up on shared drives?

Yes — but only platforms that are identity-aware can do so safely at scale. Lightbeam enforces least privilege by understanding how access was granted, who the data belongs to, and whether permissions remain justified, enabling continuous and precise cleanup.

Which tools can auto-remediate open shares and exposed sensitive data?

Many tools detect exposure. Lightbeam executes remediation. When sensitive data is overshared, permissions are automatically revoked or narrowed, and every action is logged for auditability.

What DSPM platforms work best for mid-size financial services firms?

The best platforms combine deep discovery with automated enforcement and audit readiness. Lightbeam is designed to reduce risk continuously without adding operational overhead.

How can organizations shrink their data attack surface through data minimization?

By treating minimization as an automated security control. Lightbeam continuously identifies redundant, obsolete, and over-retained data and enforces deletion, archival, or masking policies without manual intervention.