What Is AI Security Posture Management (AI SPM)?

A Complete Guide to Securing Copilot, ChatGPT, and Enterprise AI

Seth Knox

Artificial intelligence has entered daily work faster than any technology before it. Employees now rely on Microsoft Copilot, ChatGPT, Gemini, and other AI-powered assistants to write content, summarize documents, analyze spreadsheets, and automate decisions. This surge has happened largely from the bottom up. According to recent industry research, more than 75 percent of knowledge workers already use generative AI at work, and nearly 80 percent of them rely on their own personal ChatGPT or Gemini accounts rather than company-approved tools (Microsoft Work Trend Index Report). This means the majority of enterprise AI activity happens outside official governance programs, with no visibility or security controls in place.

The implications show up every day. A financial analyst uploads a customer loan document into ChatGPT to rewrite a denial letter, instantly transferring regulated personal information outside the enterprise environment. A marketing manager drafts a presentation and Copilot quietly pulls in salary data from a misconfigured SharePoint folder because the folder is open to too many people. A developer attempts to speed up debugging and pastes proprietary source code into an unsanctioned AI tool. None of these employees intend to expose sensitive data. They are simply trying to get work done faster. But AI systems can access, analyze, and reproduce information at machine speed, creating new pathways for exposure that traditional security tools were never designed to handle.

These risks are not theoretical. Security researchers recently demonstrated that flaws in ChatGPT allowed attackers to extract other users’ conversation histories, including confidential information people believed was private. Attackers exploited prompt injection loops, data confusion across sessions, and poorly secured interface components to access content from unrelated users. The lesson was clear. When people place sensitive information into AI systems, those systems can become unpredictable leakage points. Source: The Hacker News, “5 Critical Questions For Adopting an AI Security Solution,” 2025.

At the same time, autonomous AI agents are starting to act not just as copilots but as active participants inside enterprise systems. As described in Security Boulevard, these agents ingest data in bulk, plan actions, and sometimes even execute transactions, often without meaningful oversight. They inherit powerful privileges from identity systems, yet they lack human context, risk awareness, or ethics. In one public case involving an AI-driven backend, hundreds of thousands of patient records were exposed without triggering traditional alerts, and the exposure was discovered only during an external audit. Source: Security Boulevard, “Shadow AI: Agentic Access and the New Frontier of Data Risk,” 2025.

This combination of rapid AI adoption, widespread shadow AI usage, and agentic access defines the challenge that AI Security Posture Management is designed to solve.

What is AI Security Posture Management (AI SPM)?

According to the Gartner® analysis in the Hype Cycle for AI and Cybersecurity, “AI security posture management (AI SPM) tools help enterprises scan their infrastructure to discover AI models, assistants and agents deployed and their associated data pipelines. AI SPM evaluates how enterprise AI infrastructure creates risks when exposed to data storage and processing workloads.”

Gartner subscribers can access the full report here: Gartner, Hype Cycle for AI and Cybersecurity, Jeremy D’Hoinne, Manuel Acosta, Josh Murphy, 7 August 2025.

The purpose of AI SPM is to answer questions that traditional security programs never had to consider. Which AI models and agents are being used across the enterprise? What data do they access? Do prompts or outputs contain sensitive information? Do training or inference pipelines use data that creates compliance or privacy risks? Are AI deployments configured correctly, or do they operate outside IT oversight?

As AI adoption accelerates without centralized governance, organizations need this visibility to understand and manage risk. AI SPM provides a framework to discover shadow AI, evaluate its implications, and implement appropriate controls.
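
As a rough illustration of the discovery step, the sketch below counts requests to generative AI services that are not on a sanctioned list. It is a minimal example under stated assumptions, not a description of how any particular product works: the log format (dicts with `user` and `domain` keys), the `APPROVED_AI_DOMAINS` allow-list, and the `find_shadow_ai` helper are all hypothetical.

```python
from collections import Counter

# Hypothetical allow-list of sanctioned AI services (illustrative only).
APPROVED_AI_DOMAINS = {"copilot.microsoft.com"}

# Hypothetical catalog of domains that host generative AI tools.
KNOWN_AI_DOMAINS = {
    "copilot.microsoft.com",
    "chatgpt.com",
    "gemini.google.com",
}


def find_shadow_ai(log_entries):
    """Count requests per (user, domain) to AI tools outside the allow-list.

    Each entry is assumed to be a dict with "user" and "domain" keys,
    for example parsed from web proxy or DNS logs.
    """
    hits = Counter()
    for entry in log_entries:
        domain = entry["domain"].lower()
        if domain in KNOWN_AI_DOMAINS and domain not in APPROVED_AI_DOMAINS:
            hits[(entry["user"], domain)] += 1
    return dict(hits)


sample_logs = [
    {"user": "analyst1", "domain": "chatgpt.com"},
    {"user": "analyst1", "domain": "chatgpt.com"},
    {"user": "dev7", "domain": "gemini.google.com"},
    {"user": "mgr3", "domain": "copilot.microsoft.com"},
    {"user": "mgr3", "domain": "example.com"},
]

shadow_usage = find_shadow_ai(sample_logs)
print(shadow_usage)
```

In practice the catalog of AI services would need to be far larger and kept current, and network logs are only one of several possible discovery signals.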

How does AI SPM differ from Data Security Posture Management (DSPM)?

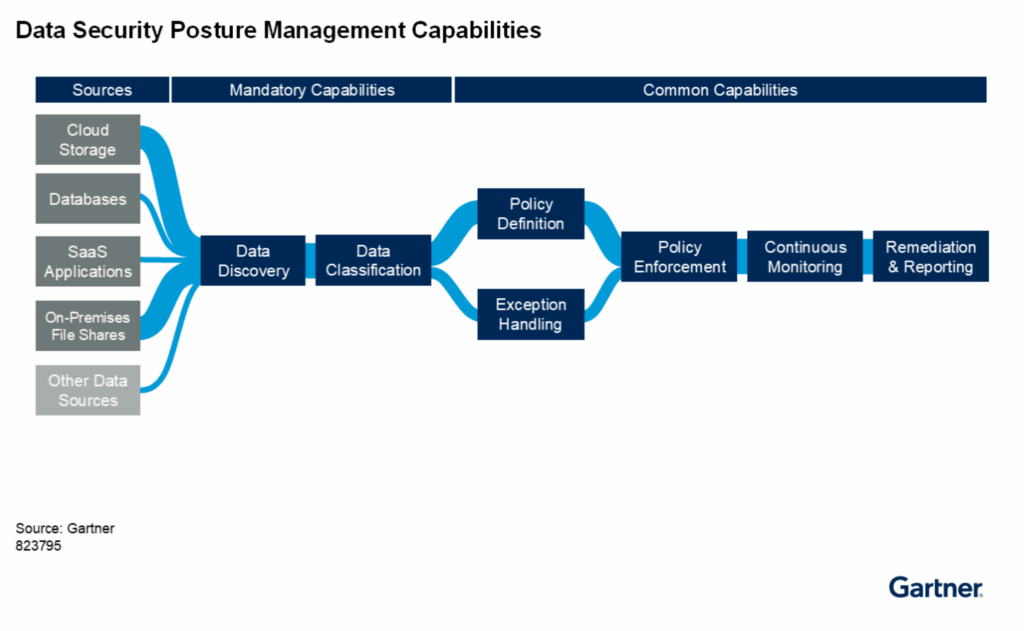

Gartner’s Market Guide for Data Security Posture Management describes DSPM as follows: “Data security posture management (DSPM) discovers previously unknown data across on-premises data centers and cloud service providers (CSPs). It also helps categorize and classify previously unknown and discovered unstructured and structured data. As data rapidly proliferates, DSPM assesses who has access to it to determine its security posture and exposure to privacy, security and AI-usage-related risks. DSPM is delivered as software or as a service.”

Gartner subscribers can access the full report here: Gartner, Market Guide for Data Security Posture Management, Joerg Fritsch, Brian Lowans, Andrew Bales, 17 September 2025.

DSPM focuses on understanding the data itself. AI SPM focuses on how AI systems interact with that data.

What can AI SPM tools do to reduce risk?

AI SPM tools reduce risk by discovering AI systems, understanding how they handle data, correlating behavior with identity and entitlements, enforcing policy in real time, and creating an auditable record of activity. They identify unsafe prompts, risky model outputs, unauthorized data ingestion, misconfigurations, shadow AI agents, and inappropriate access patterns. Effective AI SPM solutions support policy-driven remediation, restrict access, quarantine sensitive outputs, and notify teams when risky content appears in AI conversations.
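
To make "identifying unsafe prompts" more concrete, here is a minimal sketch of pattern-based prompt scanning. It assumes a deliberately simple approach: the `SENSITIVE_PATTERNS` table and the `scan_prompt` function are hypothetical, and the regular expressions are illustrative stand-ins for the much richer classification a real tool would apply.

```python
import re

# Illustrative patterns only; production classifiers combine many more
# signals (validation checksums, context, ML models) than these regexes.
SENSITIVE_PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}


def scan_prompt(text):
    """Return a sorted list of the sensitive data types found in the text."""
    return sorted(
        name
        for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(text)
    )


risky_prompt = (
    "Rewrite this denial letter for jane.doe@example.com, SSN 123-45-6789."
)
clean_prompt = "Summarize the quarterly roadmap."

risky_findings = scan_prompt(risky_prompt)
clean_findings = scan_prompt(clean_prompt)
print(risky_findings)
print(clean_findings)
```

Simple patterns like these produce both false positives and false negatives, which is one reason prompt scanning is usually combined with identity and entitlement context rather than used alone.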

How does Lightbeam reduce AI data exposure risk?

Lightbeam brings DSPM and AI SPM together through its Data Identity Graph, which maps sensitive data to the individuals it represents and those who access it. Lightbeam discovers and classifies sensitive data across Microsoft 365, SharePoint, OneDrive, Teams, Salesforce, databases, cloud storage, file shares, and many other enterprise data sources.

Lightbeam’s Copilot Sensitive Data Governance capability ingests Copilot prompts, AI responses, and any referenced files such as spreadsheets, presentations, source code, or contracts. It classifies sensitive content and maps Copilot interactions back to user entitlements so security teams can see which employees surfaced sensitive data, whether they should have had access, and how often it occurs.
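
The idea of mapping AI interactions back to entitlements can be sketched generically as follows. This is not Lightbeam's implementation or API; it is a simplified illustration in which the `Interaction` record, the `FILE_LABELS` classification results, the `EXPECTED_ACCESS` business-need mapping, and the `find_oversharing` helper are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    """One AI assistant interaction and the files it referenced."""
    user: str
    referenced_files: tuple


# Hypothetical output of a data classification step.
FILE_LABELS = {
    "salaries.xlsx": "hr_confidential",
    "launch_deck.pptx": "internal",
}

# Hypothetical mapping of each user to the labels their role should see.
EXPECTED_ACCESS = {
    "marketing_mgr": {"internal"},
    "hr_partner": {"internal", "hr_confidential"},
}


def find_oversharing(interactions):
    """Flag files surfaced to users whose role does not call for that label.

    Files with no known label are treated as "unclassified" and flagged,
    since their sensitivity has not been established.
    """
    findings = []
    for item in interactions:
        allowed = EXPECTED_ACCESS.get(item.user, set())
        for name in item.referenced_files:
            label = FILE_LABELS.get(name, "unclassified")
            if label not in allowed:
                findings.append((item.user, name, label))
    return findings


interactions = [
    Interaction("marketing_mgr", ("launch_deck.pptx", "salaries.xlsx")),
    Interaction("hr_partner", ("salaries.xlsx",)),
]

oversharing = find_oversharing(interactions)
print(oversharing)
```

The flagged tuple corresponds to the earlier scenario of a marketing manager whose assistant pulled in salary data: the assistant respected the permissions it was given, but those permissions were broader than the role required.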

When regulated content appears in a Copilot conversation, Lightbeam can trigger responses such as revoking access, disabling accounts, quarantining files, applying labels, or requiring review. Lightbeam correlates AI usage with permissions, fixes underlying access issues, and provides an end-to-end audit trail of prompts, responses, referenced files, alerts, and remediation actions.
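
Policy-driven remediation with an audit trail can be pictured as a lookup from a finding's classification to a list of actions, with every decision recorded. The sketch below is a generic illustration under assumptions, not Lightbeam's product behavior: the `REMEDIATION_POLICY` table, the action names, the finding fields, and the `plan_remediation` function are hypothetical, and the function only plans and logs actions rather than executing them.

```python
from datetime import datetime, timezone

# Hypothetical policy: classification label -> ordered remediation actions.
REMEDIATION_POLICY = {
    "regulated_pii": ["quarantine_file", "require_review"],
    "hr_confidential": ["revoke_access", "apply_label"],
}

# Fallback when no policy entry matches the label.
DEFAULT_ACTIONS = ["notify_security_team"]


def plan_remediation(finding, audit_log):
    """Select actions for a finding and append a record to the audit log.

    The finding is assumed to be a dict with "user", "file", and "label".
    """
    actions = list(REMEDIATION_POLICY.get(finding["label"], DEFAULT_ACTIONS))
    audit_log.append(
        {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": finding["user"],
            "file": finding["file"],
            "label": finding["label"],
            "actions": actions,
        }
    )
    return actions


audit_log = []
finding = {
    "user": "marketing_mgr",
    "file": "salaries.xlsx",
    "label": "hr_confidential",
}

planned = plan_remediation(finding, audit_log)
print(planned)
print(audit_log[0]["actions"])
```

Keeping the policy as data rather than code makes it easier for security and privacy teams to review and adjust which responses apply to which kinds of content.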

Organizations everywhere are racing to adopt AI, but very few have the visibility or controls needed to protect sensitive data as Copilot, ChatGPT, Gemini, and autonomous agents enter daily workflows. Lightbeam helps you deploy AI responsibly by unifying DSPM, AI Security Posture Management, privacy operations, and access governance in one identity-centric platform.

GARTNER is a registered trademark and service mark of Gartner, Inc. and/or its affiliates in the U.S. and internationally and is used herein with permission. All rights reserved.

If your organization is rolling out Microsoft Copilot or evaluating generative AI tools, take the next step with a free 30-day AI Privacy and Risk Assessment. You will see exactly which sensitive data your AI tools can access, where oversharing is occurring, and what controls you can implement immediately to reduce risk.

FAQ

What is AI Security Posture Management (AI SPM)?

AI Security Posture Management (AI SPM) is the process of continuously discovering, monitoring, and securing AI systems, models, and data pipelines. It helps organizations identify risks related to AI usage, protect sensitive data, and enforce governance policies.

Why is AI SPM important for enterprises?

AI SPM helps ensure that AI tools like copilots, chatbots, and AI agents do not expose sensitive enterprise data. It provides visibility into AI data flows, permissions, and interactions, reducing the risk of data leakage or regulatory violations.

How is AI SPM different from DSPM?

Data Security Posture Management (DSPM) focuses on discovering and protecting sensitive data across cloud and SaaS environments. AI SPM extends those capabilities to AI systems, securing prompts, training data, model outputs, and AI workflows.

How does Lightbeam support AI SPM?

Lightbeam combines DSPM, identity-centric governance, and AI monitoring through its Data Identity Graph. This enables organizations to discover sensitive data, govern access, and monitor AI systems interacting with enterprise data.